I’m a member of SMPTE, and as such I receive the SMPTE Journal fairly regularly. The latest issue contains an article that’s backed by the best intentions but, in the long run, poses an unintended threat to the film industry.

The technical paper, entitled “Toward a Standard Model of HD Cameras,” details a very interesting process by which the authors attempt to find consistencies in how modern HD cameras see color. The goal is to come up with some sort of software “model” that can predict how HD cameras will respond to various types of broken-spectrum lights (sources with gaps and spikes in their spectra), such as LEDs. This information could give lighting manufacturers a target to shoot for when developing LED lighting for the motion picture industry, and save us all a lot of grief and testing when a new LED light comes out.

The paper details how the authors profiled 12 cameras using a very ingenious method: they showed each camera a series of colored filters, each passing a very narrow (5 nm) slice of the visible spectrum, and they turned the results into a series of charts that display very clearly how each camera sees red, green and blue across the visible spectrum. This could be hugely valuable except for one thing:

They only profiled three-sensor cameras.

A three-sensor camera has three sensors positioned around a prism block, and that prism takes in the light that passes through the lens and splits it into its red, green and blue components. The dichroic filter in front of each sensor can be very finely tuned to pass a specific range of wavelengths, and each pixel in the final image has full red, green and blue color information.

This is not true of single-sensor cameras. Not only are dichroic filters not feasible for use on tiny photosites, but there are only a limited number of materials that both pass the proper wavelengths of light and adhere to the silicon surface of the sensor. This results in very different colorimetry for single-sensor cameras when compared to three-sensor cameras.

As if that wasn’t enough, most three-sensor cameras are limited to Rec 709 colorimetry as it is very expensive to make a wide color gamut prism block, or so I’ve been told by one large camera manufacturer. All single-sensor cameras seem capable of capturing some sort of wide gamut color, so it’s clear that the dye filters on the surface of a single-sensor camera are very different to the dichroic filters in three-sensor cameras.

For about a decade one of my key clients was a health insurance provider, so I shot inside a lot of fluorescent-lit hospitals. When we used three-sensor cameras (Sony DXC-D30, Panasonic SDX-900 and HDX-900) I was able to set my white balance to tungsten preset and supplement ambient light with tungsten units, as the camera saw light from warm white fluorescents and tungsten lamps as being roughly equal. Single-sensor cameras, however, see the green spike in fluorescents as clearly as film does. When I shot inside a hospital last year for another client using a Sony F3 I had to add 1/2 plus green to all my tungsten lights and then white balance to them in order to make all the background fluorescents appear neutral. (This is true of all other single-sensor cameras that I’ve shot with.)

This works well until someone turns on a non-gelled tungsten light, or opens a window and lets daylight spill in, at which point that extra light turns magenta. (Magenta is simply the absence of green: when I gel my foreground lights with plus green gel and white balance to them, every other “normal” [non-fluorescent and non-gelled] source of light suddenly has too little green and looks magenta, or purple, by comparison.)
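The magenta shift is plain white-balance arithmetic. This minimal sketch uses made-up RGB values, already normalized so that ungelled tungsten reads neutral, and treats a 1/2 plus green gel as a toy filter that passes 80% of red and blue and all of the green. It also assumes the fluorescents read the same way, since that is what the gel was chosen to match. None of these numbers come from a real gel or camera, and the function names are hypothetical. White balancing to the gelled light makes it and the fluorescents neutral. Every ungelled source then comes out with red and blue above green, which is magenta.

```python
def white_balance_gains(reference_rgb):
    """Per-channel gains that make the reference source render as R = G = B."""
    r, g, b = reference_rgb
    return (g / r, 1.0, g / b)

def apply_gains(gains, rgb):
    """Multiply each channel by its white-balance gain."""
    return tuple(k * c for k, c in zip(gains, rgb))

def looks_magenta(rgb, tolerance=1e-9):
    """Magenta = a green deficit: both red and blue exceed green."""
    r, g, b = rgb
    return r > g + tolerance and b > g + tolerance

# Hypothetical values, normalized so ungelled tungsten is neutral.
ungelled_tungsten = (1.0, 1.0, 1.0)
fluorescent = (0.8, 1.0, 0.8)       # toy: the green spike reads as extra green
gelled_tungsten = (0.8, 1.0, 0.8)   # toy 1/2 plus green gel chosen to match

gains = white_balance_gains(gelled_tungsten)
for name, source in [("gelled tungsten", gelled_tungsten),
                     ("fluorescent", fluorescent),
                     ("ungelled tungsten", ungelled_tungsten)]:
    rendered = apply_gains(gains, source)
    print(name, [round(c, 3) for c in rendered],
          "magenta" if looks_magenta(rendered) else "neutral")
```

Daylight spilling through a window behaves like the ungelled tungsten here. Anything that did not get the gel ends up with too little green once the camera is balanced to the gelled lights.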

This isn’t a simple color reproduction issue; all manufacturers have their own “secret sauce” for making what they consider beautiful color, and all cameras look a bit different even though they all hit the same specs, more or less. Rather, it’s a color sensing issue: prism cameras don’t see the part of the spectrum where the fluorescent green spike occurs, or they don’t see it very well, while single-sensor cameras, with their broader color gamut, see it all too well.
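The difference between sensing and reproduction can be sketched numerically. This sketch assumes that a camera's raw response to a light is the light's spectrum multiplied by each channel's spectral sensitivity and summed over narrow wavelength bins (5 nm here). That is the kind of relationship a "standard model" would have to capture. Every curve below is a made-up bell shape, not data from the paper or from any real camera. The camera names and function names are hypothetical. Each imaginary camera is white balanced on a smooth broadband light and then shown the same light with a narrow spike in the 545 nm bin, near the 546 nm mercury line responsible for the green spike in fluorescents.

```python
import math

# 5 nm bins across the visible spectrum.
WAVELENGTHS = list(range(400, 705, 5))  # 400, 405, ..., 700 nm
BIN_NM = 5.0

def bell(center_nm, width_nm):
    """Hypothetical smooth sensitivity curve sampled at each 5 nm bin."""
    return [math.exp(-0.5 * ((w - center_nm) / width_nm) ** 2) for w in WAVELENGTHS]

# Two imaginary cameras with made-up spectral sensitivities (NOT measured data).
CAMERA_A = {"R": bell(600, 30), "G": bell(540, 35), "B": bell(460, 30)}
CAMERA_B = {"R": bell(610, 25), "G": bell(510, 20), "B": bell(455, 25)}

def camera_response(spd, camera):
    """Sum light power x channel sensitivity over all bins for R, G and B."""
    return {ch: BIN_NM * sum(p * s for p, s in zip(spd, curve))
            for ch, curve in camera.items()}

def balanced_rgb(camera, reference_spd, test_spd):
    """White balance on the reference light, then render the test light (G = 1)."""
    ref = camera_response(reference_spd, camera)
    test = camera_response(test_spd, camera)
    gains = {ch: ref["G"] / ref[ch] for ch in ref}  # makes reference R = G = B
    out = {ch: test[ch] * gains[ch] for ch in test}
    return {ch: out[ch] / out["G"] for ch in out}

# Hypothetical lights: flat broadband, and the same light plus a narrow
# spike in the 545 nm bin, near the 546 nm mercury line in fluorescents.
smooth = [1.0] * len(WAVELENGTHS)
spiky = [1.0 + (8.0 if w == 545 else 0.0) for w in WAVELENGTHS]

for name, cam in [("camera A", CAMERA_A), ("camera B", CAMERA_B)]:
    rgb = balanced_rgb(cam, smooth, spiky)
    print(name, {ch: round(v, 3) for ch, v in rgb.items()})
```

With these invented curves, both cameras render the spiky light green after white balance, with red and blue below green. Camera A's green curve peaks near the spike, so it shows a noticeably stronger cast than camera B. The same light on two different sets of sensitivity curves gives two different colors, which is why a model built from one family of cameras says little about another.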

Every single-sensor camera I’ve worked with reproduces color very differently to a three-sensor camera. Some of this is artistic choice, some of it is complex math, and some of it may be necessity. For example, Canon cameras see the red patch on a Chroma Du Monde Rec 709 color chart as being slightly orange, as do RED cameras. The Sony F5 and F55 did the same before a recent firmware update. Arri Alexa is the only single-sensor camera that has put that red patch directly on its vector from the start.

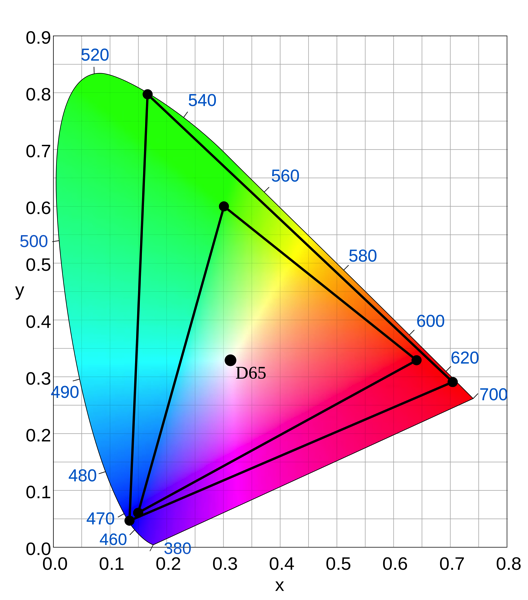

I don’t know why red is so hard to hit for some of these cameras. In the case of Canon it could be that they are sacrificing red to make flesh tones look gorgeous, which is something they excel at. Or it could mean that the “red” filter on the color filter array passes a little more green than other cameras’ red filters do. Or, if you look at this chart of the Rec 709 and Rec 2020 (UHD) color spaces, you’ll see that Rec 709 red actually is more orange than Rec 2020 red:

Maybe this is where red falls in Rec 709 mode when you translate that color from a wider color space like Sony S-Gamut. It may be mathematically correct, but it may not be “aesthetically correct.” We all know what flesh tone looks like because it’s burned into a very primitive part of our brain, but how many red objects in the world do we identify only as pure red? Not many, if any. Cheating red makes perfect sense if your goal is beautiful flesh tone.
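Both points can be checked roughly with a minimal sketch. It uses the published Rec 709 and Rec 2020 primaries and their shared D65 white point. Rec 2020 stands in for a wide camera gamut such as S-Gamut, whose primaries are not used here. The sketch does two things. First, it measures each red primary's angle around the white point in the CIE 1931 xy diagram. This is a crude proxy for hue, since xy is not perceptually uniform. Second, it converts a fully saturated Rec 2020 red into linear Rec 709 RGB and hard-clips it. Hard clipping is a naive gamut-mapping choice assumed here for illustration; real cameras use their own methods.

```python
import math

D65 = (0.3127, 0.3290)
REC709 = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]   # R, G, B (x, y)
REC2020 = [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)]  # R, G, B (x, y)

def xy_angle(xy, white=D65):
    """Angle in degrees around the white point; larger = rotated toward yellow."""
    return math.degrees(math.atan2(xy[1] - white[1], xy[0] - white[0]))

def xy_to_xyz(x, y):
    """Chromaticity to XYZ with luminance Y = 1."""
    return [x / y, 1.0, (1.0 - x - y) / y]

def mat_vec(m, v):
    return [sum(m[r][k] * v[k] for k in range(3)) for r in range(3)]

def mat_mul(a, b):
    return [[sum(a[r][k] * b[k][c] for k in range(3)) for c in range(3)]
            for r in range(3)]

def inverse(m):
    """Inverse of a 3x3 matrix via the adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [[e * i - f * h, c * h - b * i, b * f - c * e],
           [f * g - d * i, a * i - c * g, c * d - a * f],
           [d * h - e * g, b * g - a * h, a * e - b * d]]
    return [[v / det for v in row] for row in adj]

def rgb_to_xyz(primaries, white=D65):
    """Linear RGB -> XYZ: scale each primary so RGB (1, 1, 1) maps to white."""
    cols = [xy_to_xyz(*p) for p in primaries]
    m = [[cols[c][r] for c in range(3)] for r in range(3)]
    scale = mat_vec(inverse(m), xy_to_xyz(*white))
    return [[m[r][c] * scale[c] for c in range(3)] for r in range(3)]

# Linear Rec 2020 RGB -> linear Rec 709 RGB.
M_2020_TO_709 = mat_mul(inverse(rgb_to_xyz(REC709)), rgb_to_xyz(REC2020))

print("Rec 709 red angle: ", round(xy_angle(REC709[0]), 2))
print("Rec 2020 red angle:", round(xy_angle(REC2020[0]), 2))

wide_red = [1.0, 0.0, 0.0]                 # fully saturated Rec 2020 red
as_709 = mat_vec(M_2020_TO_709, wide_red)  # R > 1, G and B < 0: out of gamut
clipped = [min(max(v, 0.0), 1.0) for v in as_709]
print("Rec 2020 red in Rec 709:", [round(v, 4) for v in as_709])
print("after hard clip:        ", clipped)
```

With these inputs, Rec 709 red sits about five and a half degrees further toward yellow than Rec 2020 red. The converted Rec 2020 red needs a red value above 1 with negative green and blue, so Rec 709 cannot show it as-is. Clipping drops it onto the Rec 709 red primary, the more orange of the two. That is one mathematically tidy way for a saturated red to drift toward orange.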

Also, not every camera captures the same wide gamut. For example, the Sony F3 and the F5 share the same color filter array and capture an extended color gamut in log mode, but the F55 and F65 capture a broader color gamut due to their very different color filter array. Both offer extended gamuts, but only the F55/F65 offer full Sony S-Gamut.

For these reasons, I think it’s nearly impossible to come up with a “standard model” of an HD camera if you don’t test every type of HD camera on the market. This doesn’t necessarily mean every model of camera, but testing at least one Canon professional camera (C100/C300/C500) should give a good idea of what Canon are doing with color, as would testing the F55/F65 and F5/F3 Sony cameras, the Arri Alexa, and a RED Epic. And heck, test a Blackmagic camera and a GoPro while you’re at it.

I’ve done a lot of color testing, and I’ve done a lot of consulting on LED lights. When I shot the Cineo Lighting/PRG LED light test I specifically chose to shoot with an Arri Alexa, both because it is a single-sensor camera with broader color response than three-sensor cameras and because it has the most accurate color I’ve seen in a single-sensor camera. This test, though, would have looked different on other cameras: probably not dramatically different, but subtly different. For example, there are some lights in the test that look a little too red, and when I did an informal comparison using two three-sensor cameras made by two other companies I saw two slightly different results. (One saw a little extra red and one looked perfectly normal.)

I helped design one of the lights in the test, the Gekko Kelvin Tile, for the original developer, Element Labs, and at the time we used a Sony F900 for our color science reference. Everything we did to make that light look great we did in relation to how it looked on an F900. Now, on a single-sensor camera, it looks very different to what we saw on an F900, which is much more forgiving of spectral gaps and spikes.

In particular, single-sensor cameras tend to be a bit more sensitive to far red than prism cameras. Far red, or what is incorrectly but commonly known as “IR”, is red just at the edge of human vision. We need some far red to make flesh tone look pretty; without it skin looks a bit dull and gray. Prism cameras seem to let just enough through and cut off the excess, while single-sensor cameras roll it off a bit more gently, which can result in odd color shifts when using dense filters such as NDs. (IRND filters excepted, filters and gels cut visible light quite well but have little to no effect on far red and true IR, so the denser the filter, the larger the share of far red in the light that reaches the sensor.)
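A toy calculation shows why a dense ND can tint the image. This sketch rests on three assumptions. First, the red channel picks up a small amount of far red: the hypothetical `far_red_leak`, set to 2% of the visible red signal purely for illustration. Second, a plain ND cuts visible light according to its optical density but passes far red untouched. Third, an IRND cuts both equally. None of these numbers are measurements, and the function names are hypothetical.

```python
def nd_transmission(optical_density):
    """Fraction of light an ND passes: 10 ** -density (0.3 = one stop)."""
    return 10 ** (-optical_density)

def red_to_green(optical_density, far_red_leak, blocks_far_red):
    """Toy red/green ratio for a neutral subject behind an ND filter.

    Visible red and green start equal (1.0). A plain ND attenuates only the
    visible light; an IRND (blocks_far_red=True) attenuates far red too.
    """
    t_visible = nd_transmission(optical_density)
    t_far_red = t_visible if blocks_far_red else 1.0
    red = 1.0 * t_visible + far_red_leak * t_far_red
    green = 1.0 * t_visible
    return red / green

LEAK = 0.02  # hypothetical far red picked up by the red channel

print("no filter:", round(red_to_green(0.0, LEAK, False), 3))
print("ND 1.2:   ", round(red_to_green(1.2, LEAK, False), 3))
print("IRND 1.2: ", round(red_to_green(1.2, LEAK, True), 3))
```

In this toy model, a clear lens gives a red-to-green ratio of 1.02. An ND 1.2 (four stops) pushes it to about 1.32, and the IRND leaves it at 1.02. A leak that is negligible without the filter becomes a visible red shift once the visible light is cut. A sensor that lets more far red through, which is the article's observation about single-sensor cameras, would show a larger shift.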

I think that the experiment described in the SMPTE Journal article is really interesting, but it leaves out an entire class of camera that has taken over where film used to reign. As long as this “standard HD camera” model only covers live sports, news, talk shows and soap operas (the kind of programming most often shot by three-sensor cameras), it should work fine, but it won’t model any of the cameras that are commonly used to shoot features, episodic television shows or commercials. The authors could state that they are making a “standard model of three-sensor HD cameras,” but the distinction may be lost on all but the most technical readers. That means that someone somewhere will eventually use a light designed to the “standard HD model” with a standard single-sensor camera and discover that all is not as it should be. And that could cost someone a lot of money.

Disclosure: I have worked as a paid consultant to DSC Labs, PRG and Element Labs, and as an unpaid consultant to Sony, Canon and Arri.

About the Author

Director of photography Art Adams knew he wanted to look through cameras for a living at the age of 12. After spending his teenage years shooting short films on 8mm film he ventured to Los Angeles where he earned a degree in film production and then worked on feature films, TV series, commercials and music videos as a camera assistant, operator, and DP.

Art now lives in his native San Francisco Bay Area where he shoots commercials, visual effects, virals, web banners, mobile, interactive and special venue projects. He is a regular consultant to, and trainer for, DSC Labs, and has periodically consulted for Sony, Arri, Element Labs, PRG, Aastro and Cineo Lighting. His writing has appeared in HD Video Pro, American Cinematographer, Australian Cinematographer, Camera Operator Magazine and ProVideo Coalition. He is a current member of SMPTE and the International Cinematographers Guild, and a past active member of the SOC.

Art Adams

Director of Photography

www.artadamsdp.com

Twitter: @artadams